In this case, there is no point in drawing this extra shape.

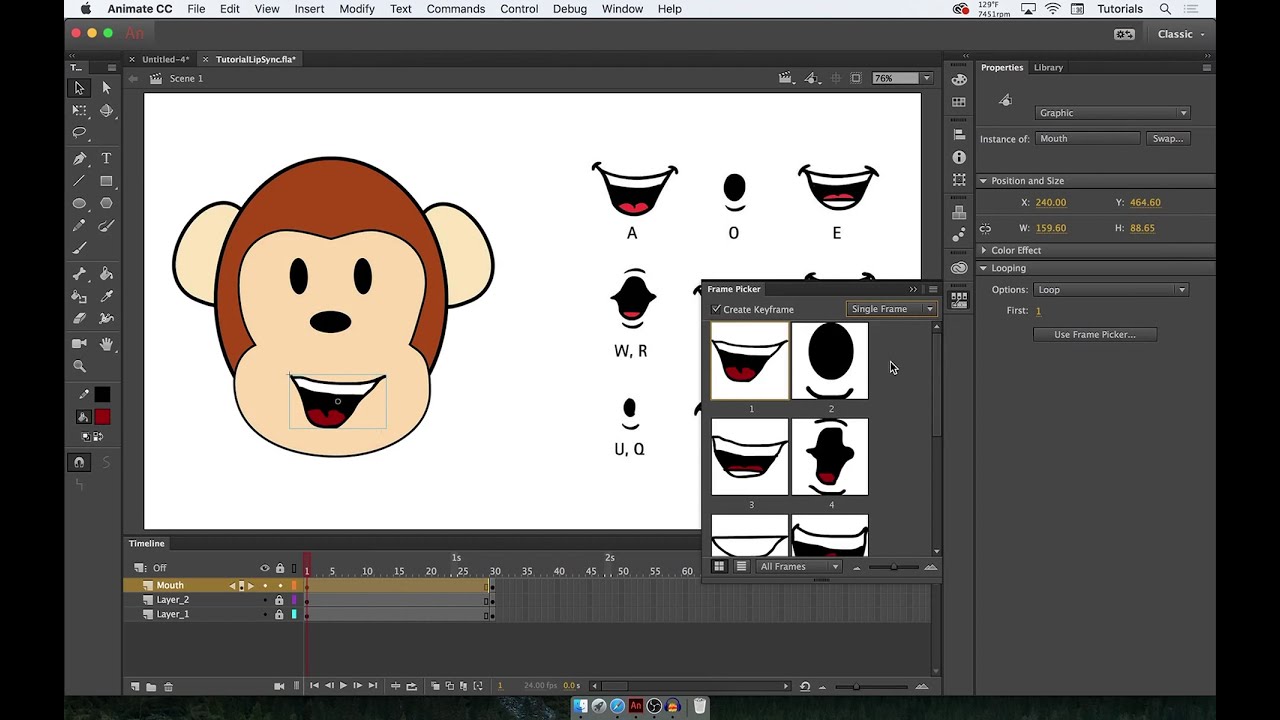

Depending on your art style and the angle of the head, the tongue may not be visible at all. The mouth should be at least far open as in Ⓒ, but not quite as far as in Ⓓ. This shape is used for long “L” sounds, with the tongue raised behind the upper teeth. If you decide not to use it, you can specify so using the extendedShapes option. If your art style is detailed enough, it greatly improves the overall look of the animation. Upper teeth touching the lower lip for “F” as in for and “V” as in very. This mouth shape is used for “UW” as in y ou, “OW” as in sh ow, and “W” as in way. Both ⒸⒺⒻ and ⒹⒺⒻ should result in smooth animation. Make sure the mouth isn’t wider open than for Ⓒ. This shape is also used as an in-between when animating from Ⓒ or Ⓓ to Ⓕ. This mouth shape is used for vowels like “AO” as in off and “ER” as in b ird. This mouth shapes is used for vowels like “AA” as in f ather. So make sure the animations ⒶⒸⒹ and ⒷⒸⒹ look smooth! This shape is also used as an in-between when animating from Ⓐ or Ⓑ to Ⓓ. It’s also used for some consonants, depending on context. This mouth shape is used for vowels like “EH” as in m en and “AE” as in b at. It’s also used for some vowels such as the “EE” sound in b ee. This mouth shape is used for most consonants (“K”, “S”, “T”, etc.). This is almost identical to the Ⓧ shape, but there is ever-so-slight pressure between the lips. You may choose to draw all three of them, pick just one or two, or leave them out entirely.Ĭlosed mouth for the “P”, “B”, and “M” sounds.
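The mouth-shape rules described above can be sketched as a small lookup, which may help when checking that a set of drawings covers every sound. This is a minimal illustration, not the tool's actual implementation. The letter attached to each group of sounds is inferred from the in-between rules in the text, the phoneme labels are the ones used above, and the names `shape_for`, `with_inbetweens`, `DEFAULT_SHAPE`, and the `EXTENDED_FALLBACK` table are hypothetical.

```python
# Phoneme labels as used in the text; the shape letters are inferred assumptions.
PHONEME_TO_SHAPE = {
    "P": "Ⓐ", "B": "Ⓐ", "M": "Ⓐ",             # closed mouth
    "K": "Ⓑ", "S": "Ⓑ", "T": "Ⓑ", "EE": "Ⓑ",  # most consonants, "EE" as in bee
    "EH": "Ⓒ", "AE": "Ⓒ",                     # men, bat
    "AA": "Ⓓ",                                # father
    "AO": "Ⓔ", "ER": "Ⓔ",                     # off, bird
    "UW": "Ⓕ", "OW": "Ⓕ", "W": "Ⓕ",           # you, show, way
    "F": "Ⓖ", "V": "Ⓖ",                       # extended: teeth on lower lip
    "L": "Ⓗ",                                 # extended: long "L"
}

# Assumption: unknown sounds fall back to Ⓑ, which the text says covers most consonants.
DEFAULT_SHAPE = "Ⓑ"

# Hypothetical substitutes for extended shapes that were not drawn.
# Ⓧ -> Ⓐ follows the text ("almost identical"); the other two are guesses.
EXTENDED_FALLBACK = {"Ⓖ": "Ⓑ", "Ⓗ": "Ⓒ", "Ⓧ": "Ⓐ"}

# In-between shapes named in the text: Ⓒ between Ⓐ/Ⓑ and Ⓓ, Ⓔ between Ⓒ/Ⓓ and Ⓕ.
INBETWEEN = {
    ("Ⓐ", "Ⓓ"): "Ⓒ", ("Ⓑ", "Ⓓ"): "Ⓒ",
    ("Ⓒ", "Ⓕ"): "Ⓔ", ("Ⓓ", "Ⓕ"): "Ⓔ",
}


def shape_for(phoneme, extended_shapes="ⒼⒽⓍ"):
    """Return the mouth shape for one phoneme, honoring the drawn extended shapes."""
    shape = PHONEME_TO_SHAPE.get(phoneme, DEFAULT_SHAPE)
    if shape in EXTENDED_FALLBACK and shape not in extended_shapes:
        shape = EXTENDED_FALLBACK[shape]
    return shape


def with_inbetweens(shapes):
    """Insert the in-between shape wherever the text calls for one."""
    result = []
    for shape in shapes:
        if result:
            tween = INBETWEEN.get((result[-1], shape))
            if tween is not None:
                result.append(tween)
        result.append(shape)
    return result


shapes = [shape_for(p) for p in ["M", "AA", "W"]]
animation = with_inbetweens(shapes)  # ['Ⓐ', 'Ⓒ', 'Ⓓ', 'Ⓔ', 'Ⓕ']
```

Passing `extended_shapes=""` to `shape_for` models an art style that draws none of the optional shapes, so “V” would resolve to the hypothetical substitute Ⓑ instead of Ⓖ.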

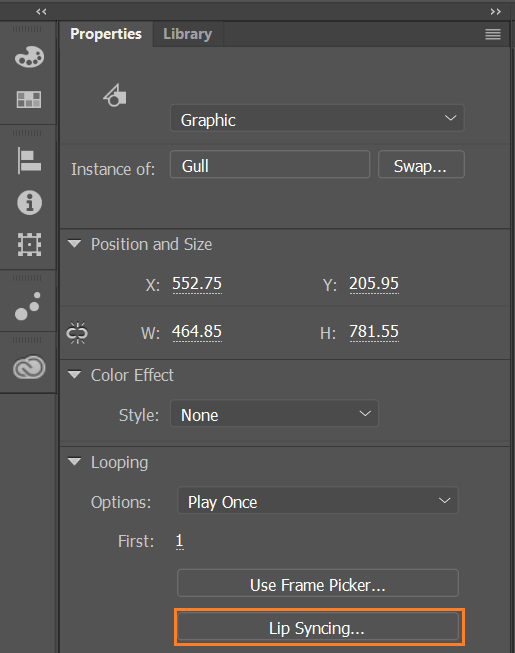

In a paper that has been prepublished on arXiv, two researchers at Adobe Research and the University of Washington introduced a deep-learning-based interactive system that automatically generates live lip syncing for layered 2D animated characters.

In addition to the six basic mouth shapes, there are three extended mouth shapes: Ⓖ, Ⓗ, and Ⓧ.

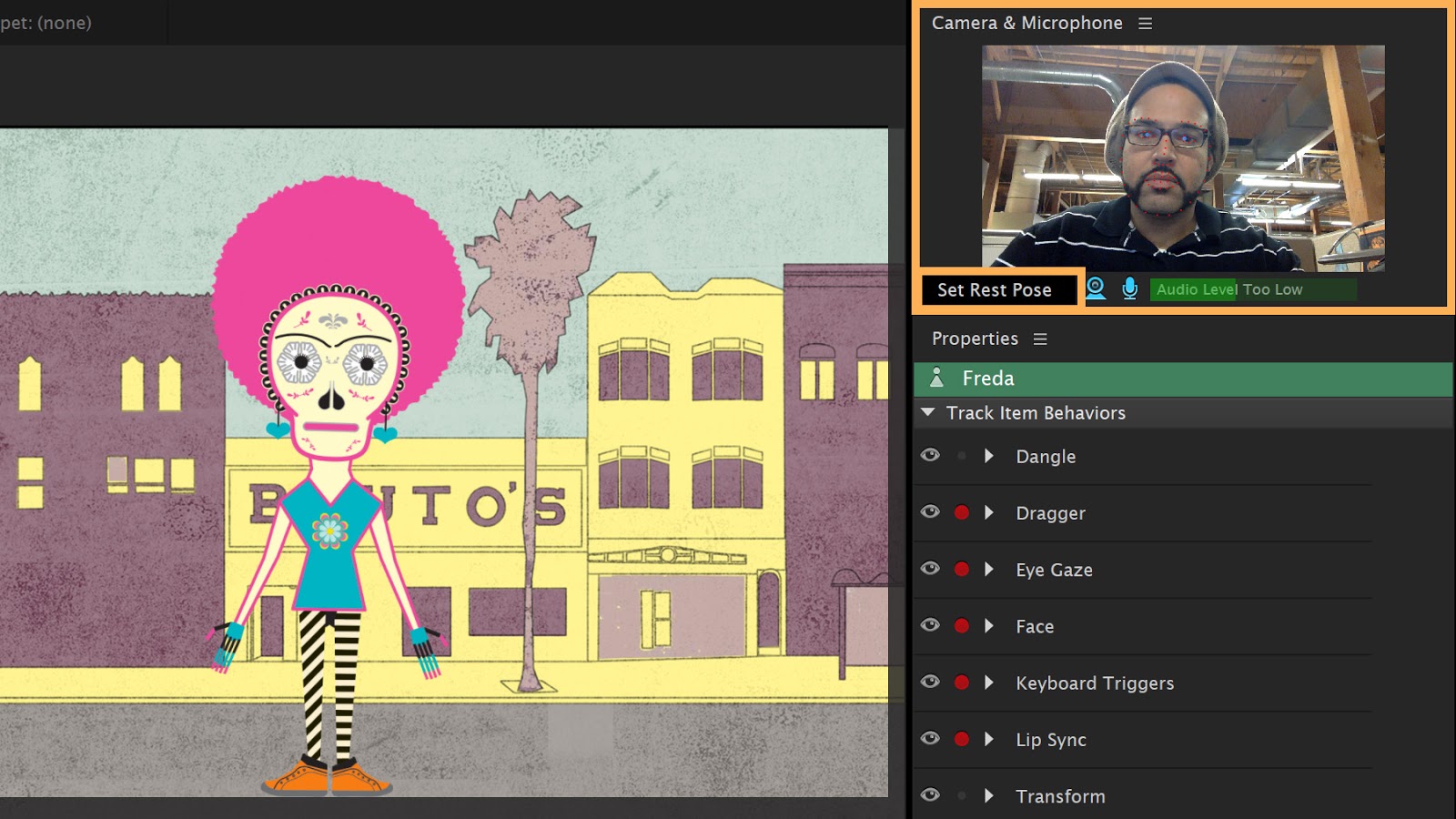

A voice actor jokingly replied: “Very few cartoons are broadcast live. It’s a terrible strain on the animators’ wrists.” It seems that Homer's was a prophetic vision, as in 2019 live 2D animation is a powerful new medium whereby actors can act out a scene that is animated on screen at the very same time. The first-ever live cartoon, an episode of The Simpsons, was aired in 2016. One recent example of the medium saw Stephen Colbert interviewing cartoon guests, including Donald Trump, on the Late Show. Another saw Archer's main character talking to an audience live and answering questions during a Comic-Con panel event.

The live animation technique uses facial detection software, cameras, recording equipment, and digital animation techniques to create a live animation that runs alongside a real acted scene. Machine learning is allowing all of this to run even more smoothly and keep improving.

## Live lip syncing

A key aspect of live 2D animation is good lip syncing. It allows the mouths of animated characters to move appropriately when speaking and mirror the performances of human actors. Poor lip syncing, on the other hand, like a bad language dub, can break the technique by ruining immersion.